In The Mind-Over-Matter Battle, It Is The Former That Must Reign Supreme

Prithwis Mukerjee

Aug 01, 2018, 01:43 PM | Updated 01:43 PM IST

The Ghost in the Machine is a 1967 book by the noted Hungarian-born author Arthur Koestler about the mind-body problem, the question of how the intangible mind relates to the physical body. Half a century later, the rapid rise of Artificial Intelligence (AI) and the proliferation of autonomous robots, including self-driving cars, have sharpened our focus on this question. Today, we seek technology that will seamlessly connect the intangibility of knowledge, intent and desire with the physicality of a corresponding action.

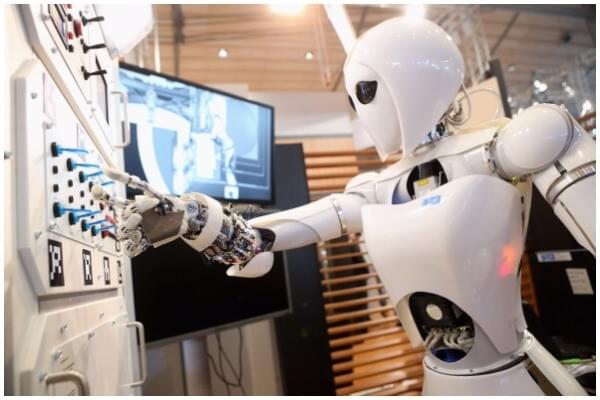

A cursory glance at the technology landscape reveals several strands of inquiry. First, there is vanilla artificial intelligence, focussed on digital phenomena such as image, handwriting and voice recognition, language translation, and strategy games like chess and Go. Extending this is vanilla robotics, which lets us operate complicated machine tools in applications ranging from heavy manufacturing to delicate surgical procedures. These two strands come together in autonomous systems such as self-driving cars and data-driven decision processes. Such systems can sense, or are being designed to sense, a rapidly changing environment and react in a way that helps achieve goals set initially by human programmers and then, increasingly and somewhat disconcertingly, by the non-human devices “themselves”.

This last narrative, of autonomous robots taking on a role in the affairs of human society and replacing humans not just in low-end physical work but also in complex management and administrative decisions, is causing growing angst among the general public. Popular culture is rife with fears of robotic overlords taking over the planet, ranging from large-scale unemployment to the subtle disempowerment and marginalisation of humans.

Shining a ray of hope into this otherwise dystopian darkness are two other, somewhat similar, streams of technology that are steadily gaining strength. The first is bio-hacking, which seeks to augment human abilities. This goes beyond merely applying mechanical devices, such as levers and pulleys to raise heavy weights, or servo motors and power steering that let humans control big machines. Bio-hackers instead enhance the body's own organs, allowing a person to, say, sense ultraviolet rays.

In other examples, Radio Frequency Identification (RFID) chips are implanted under the skin to open doors and, in extreme cases, transcranial electrical stimulation is used to alter the brain's ability to respond to emergencies.

What looks like the end-game of this body-enhancement process is the fourth and final strand of technology, which hooks into the human brain to determine its intentions and then executes those intentions using far more powerful non-human machines.

Thus the ghost of an intention or desire, “residing” or originating in the brain, is liberated from the physical limitations of a biological body and allowed to directly control the immense computational and electro-mechanical power of a physical machine to achieve its goals. This tight integration of the ghost and the machine, in which the latter is controlled by the former through thought alone, is what may allow carbon-based human intelligence to hold its own against the purely silicon-based artificial intelligence that threatens to swamp it.

Controlling devices and machinery with thoughts, or rather with the mind, is not really new. The concept has moved from mythology, through science fiction, to actual implementation in wheelchairs for quadriplegic patients. These are unfortunate people who are paralysed from the neck down, a condition for which there is currently no cure. Over the last 10 years, engineers have acquired the expertise to use electroencephalography (EEG) to sense electrical signals in the brain and use this information to guide a wheelchair according to its user's wishes. A simple Google search (http://bit.ly/eeg-wheelchair) shows the range and diversity of EEG wheelchair products, including do-it-yourself kits for amateurs that are readily available or can be built at home.
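The article does not say how any particular EEG wheelchair turns brain signals into movement, so what follows is only a minimal sketch of one well-documented principle, with every name and number in it hypothetical. When a person imagines moving one hand, the power of the 8–12 Hz “mu” rhythm tends to fall over the opposite side of the motor cortex, at the electrode sites conventionally labelled C3 (left) and C4 (right). The sketch assumes one second of signal from each site sampled at 256 Hz, measures mu-band power with a plain discrete Fourier transform, and uses an arbitrary 0.8 ratio to decide between turning and going straight.

```python
import math

FS = 256  # hypothetical sampling rate (samples per second)


def band_power(signal, fs, lo_hz, hi_hz):
    """Mean spectral power of `signal` between lo_hz and hi_hz, via a naive DFT."""
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]  # remove the DC offset
    total, bins = 0.0, 0
    for k in range(1, n // 2):
        freq = k * fs / n
        if lo_hz <= freq <= hi_hz:
            re = sum(x[t] * math.cos(2 * math.pi * k * t / n) for t in range(n))
            im = sum(x[t] * math.sin(2 * math.pi * k * t / n) for t in range(n))
            total += re * re + im * im
            bins += 1
    return total / bins if bins else 0.0


def wheelchair_command(c3, c4, fs=FS, ratio=0.8):
    """Map one window of EEG from sites C3 (left) and C4 (right) to a command.

    Imagining a right-hand movement suppresses mu power over the left
    hemisphere (C3), and vice versa; if neither side is clearly suppressed,
    the chair keeps going straight.
    """
    p3 = band_power(c3, fs, 8, 12)
    p4 = band_power(c4, fs, 8, 12)
    if p3 < ratio * p4:
        return "RIGHT"
    if p4 < ratio * p3:
        return "LEFT"
    return "FORWARD"


def sine(freq_hz, amplitude, n=FS, fs=FS):
    """Synthetic test signal: a pure sine wave."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * t / fs) for t in range(n)]


strong, weak = sine(10, 1.0), sine(10, 0.3)
print(wheelchair_command(weak, strong))    # RIGHT: mu suppressed over C3
print(wheelchair_command(strong, weak))    # LEFT: mu suppressed over C4
print(wheelchair_command(strong, strong))  # FORWARD
```

A real system would filter the signal, average over many windows and calibrate against each user's own recordings; the sketch only shows how a wish expressed as a shift in brain rhythms can be reduced to a simple steering decision.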

But to be effective, this kind of brain sensing has had to be unpleasantly intrusive. Initially, electrodes had to be implanted through holes drilled into the cranium and placed in contact with neurons. With time and better technology, this has been simplified to the point where special-purpose caps with metal probes, worn tightly on the head, can serve the same purpose.

In fact, some of these caps are also being used as input devices to control video games. Nevertheless, the unwieldy interface makes them difficult to use unless one is truly desperate for the technology, as a paralysed patient is. This is where electromyography (EMG) has enabled a significant breakthrough.

The key idea behind this remarkable technique is that while the brain and the nervous system may be the origin of all our thoughts and desires, this information is communicated to the external world, and to devices, through motor control, that is, through our muscles.

When we speak, the muscles of the throat move. When we type on a keyboard, move a pencil on paper or flip a switch, the muscles of our hands move. When we are happy or sad, the muscles of our face form a smile or a frown. So information about intent or desire that lies deep inside the brain, once accessible only through intrusive EEG equipment, can now be picked up far more easily by something as simple as a wristband!

The CTRL-Kit is one such device. Designed by CTRL-labs, a neuroscience company led by former Microsoft engineer Thomas Reardon, the CTRL-Kit looks like the heavy, spiked bracelets often worn by gangsters and their henchmen. But instead of hurting an enemy, the spikes on this bracelet press against the wearer’s skin and pick up the electrical voltages generated by the muscles that move the hand.
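The article does not describe how the CTRL-Kit processes what it senses, so the following is only a generic sketch of how a muscle’s electrical activity can be turned into an on/off “activation” signal. It uses a standard approach from EMG practice, a moving root-mean-square envelope compared against a threshold learned from a resting baseline; the window length, the three-standard-deviation threshold and the synthetic trace are all hypothetical.

```python
import math


def rms_envelope(samples, window):
    """Moving root-mean-square of a raw EMG trace: rectifies and smooths it."""
    squares = [s * s for s in samples]
    envelope, acc = [], 0.0
    for i, sq in enumerate(squares):
        acc += sq
        if i >= window:
            acc -= squares[i - window]  # drop the sample leaving the window
        count = min(i + 1, window)
        envelope.append(math.sqrt(max(acc, 0.0) / count))
    return envelope


def detect_activation(samples, window=20, baseline_len=100, k=3.0):
    """Index of the first sample whose envelope exceeds the resting baseline
    by k standard deviations, or None if the muscle never activates.
    Assumes the first `baseline_len` samples were recorded at rest."""
    env = rms_envelope(samples, window)
    base = env[:baseline_len]
    mean = sum(base) / len(base)
    std = math.sqrt(sum((e - mean) ** 2 for e in base) / len(base))
    threshold = mean + k * std
    for i in range(baseline_len, len(env)):
        if env[i] > threshold:
            return i
    return None


# Synthetic traces: faint resting activity, with or without a later burst.
rest = [0.05 * math.sin(2 * math.pi * t / 7) for t in range(300)]
burst = [math.sin(2 * math.pi * t / 7) for t in range(300, 400)]
quiet = [0.05 * math.sin(2 * math.pi * t / 7) for t in range(400)]
print(detect_activation(rest + burst))  # 300
print(detect_activation(quiet))         # None
```

Learning the threshold from the wearer’s own resting signal, rather than fixing it in advance, is what lets a small contraction stand out against each person’s background noise.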

Two simple demonstrations show the immense potential of this technology. In the first, a person types on a normal keyboard and the letters appear on the screen. Then the keyboard is removed; the person simply drums on the table with the intention of typing, and the letters continue to appear. Finally, even the finger movements stop, and the mere intention to type a letter is picked up from a minute twitch in the muscle and shown as a letter on the screen. In the second demonstration, the movement of a person’s hand is sensed and shown as a corresponding “virtual” hand on the screen.

Obviously, the virtual hand on the screen could be replaced with a physical robotic hand if necessary. Making the virtual hand replicate the movement of the human hand is not difficult: one moves a finger or makes a fist, and the virtual hand does the same. The magic happens when the person does not actually move a finger but only intends to. That is when the software takes over: by not just detecting but interpreting the pattern of electrical voltages in the muscles of the wrist, it makes the virtual hand move a finger even though the human hand did not.
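How software might “interpret” a pattern rather than merely detect it can be sketched with one of the simplest pattern-recognition techniques, a nearest-centroid classifier. This is not CTRL-labs’ method, which the article does not describe. The sketch assumes a hypothetical wristband with four electrode channels, each reduced to a single amplitude value, and a short calibration in which the user moves each finger a couple of times; all the numbers are invented. Each feature vector is scaled to unit length first, so that the shape of the pattern across channels matters rather than its strength, which is what lets a faint twitch be matched to the same finger as a full movement. It needs Python 3.8 or later for `math.dist`.

```python
import math


def normalise(vector):
    """Scale a feature vector to unit length so only its shape matters."""
    norm = math.sqrt(sum(v * v for v in vector))
    return [v / norm for v in vector] if norm else list(vector)


def train(calibration):
    """Map each label to the centroid of its normalised calibration vectors."""
    model = {}
    for label, vectors in calibration.items():
        unit = [normalise(v) for v in vectors]
        model[label] = [sum(col) / len(unit) for col in zip(*unit)]
    return model


def classify(model, features):
    """Return the label whose centroid is nearest to the normalised input."""
    x = normalise(features)
    return min(model, key=lambda label: math.dist(model[label], x))


# Hypothetical calibration: 4-channel amplitudes recorded while moving each finger.
calibration = {
    "thumb": [[0.9, 0.1, 0.2, 0.1], [0.8, 0.2, 0.1, 0.1]],
    "index": [[0.1, 0.9, 0.3, 0.1], [0.2, 0.8, 0.2, 0.2]],
    "little": [[0.1, 0.2, 0.2, 0.9], [0.2, 0.1, 0.1, 0.8]],
}
model = train(calibration)

full_press = [0.15, 0.7, 0.25, 0.1]
faint_twitch = [v / 20 for v in full_press]  # same pattern, 20 times weaker
print(classify(model, full_press))    # index
print(classify(model, faint_twitch))  # index
```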

Moving from motion to words, AlterEgo is an earphone-and-headset combination developed by Arnav Kapur at the MIT Media Lab that focusses on the muscles around the face and the jaw. When a person speaks, a normal microphone picks up the physical vibrations of the air or the bones, but AlterEgo works differently.

Instead of sensing movement, it senses the voltages in the muscles that cause movement and, as a result, it can pick out words that the user is silently articulating or “saying in his head”. Even when a person is merely reading, the muscles around the head and face make quick, imperceptible movements that “sound out” the words he “hears” in his head. This process is known as sub-vocalisation, and it is the key with which AlterEgo unlocks what is going on in the brain when one is trying to speak.

Both CTRL-Kit and AlterEgo belong to an ever-expanding family of wearable devices that pick up signals originating in the brain, either directly through traditional EEG or, via the muscles, through the more user-friendly EMG technique, and interpret them to decode what the wearer intends to do or say.

But this interpretation and decoding still relies on the same, by now familiar, AI techniques, such as artificial neural networks. Scanning a blizzard of neural signals, whether from EEG or EMG, and interpreting them as a specific word or movement is really no different from the kind of AI research that goes into autonomous vehicle control or face recognition, and this may well become one of the biggest applications of AI in the years ahead.
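The point that decoding neural signals is ordinary AI can be illustrated with the simplest artificial neural network, a single-layer (multi-class) perceptron. This is not AlterEgo’s model, which the article does not describe: the three words, the three-channel feature vectors and the training data below are invented for illustration. Each word gets its own output neuron with a weight vector; the network predicts the word whose neuron scores highest, and whenever it guesses wrong it nudges the correct word’s weights toward the example and the wrong word’s weights away from it.

```python
def score(weights, bias, x):
    """Weighted sum of the inputs for one output neuron."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def predict(w, b, x):
    """Pick the word whose neuron fires most strongly."""
    return max(w, key=lambda word: score(w[word], b[word], x))


def train_perceptron(samples, words, epochs=100, lr=1.0):
    """Multi-class perceptron rule; stops early once an epoch has no mistakes."""
    dim = len(samples[0][0])
    w = {word: [0.0] * dim for word in words}
    b = {word: 0.0 for word in words}
    for _ in range(epochs):
        mistakes = 0
        for x, target in samples:
            guess = predict(w, b, x)
            if guess != target:
                mistakes += 1
                for i, xi in enumerate(x):
                    w[target][i] += lr * xi  # pull the right word closer
                    w[guess][i] -= lr * xi   # push the wrong word away
                b[target] += lr
                b[guess] -= lr
        if mistakes == 0:
            break
    return w, b


# Invented sub-vocal EMG features (three facial channels) for three words.
samples = [
    ([1.0, 0.2, 0.1], "yes"), ([0.9, 0.1, 0.2], "yes"),
    ([0.1, 1.0, 0.2], "no"), ([0.2, 0.9, 0.1], "no"),
    ([0.1, 0.2, 1.0], "call"), ([0.2, 0.1, 0.9], "call"),
]
w, b = train_perceptron(samples, ["yes", "no", "call"])
print(predict(w, b, [0.15, 0.85, 0.15]))  # no
```

A single layer can only draw straight boundaries between patterns, so practical decoders generally use larger, multi-layer networks, but the loop of learning from labelled examples and then predicting by score is the same idea.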

Popular perception casts AI, and the robots it controls, as competitors to humans and native intelligence, but going forward, the two are more likely than not to cooperate on tasks that each finds difficult to complete on its own. Structurally, or qualitatively, this is no different from a crane operator lifting a 100-tonne load or a pilot flying an aircraft at the speed of sound, but operationally, there will be a far higher degree of integration between man and machine. In effect, man will be able to evolve into a superman, with enhanced physical and mental powers.

India has already missed the bus on traditional AI. Unlike Google, Facebook, Amazon or even Alibaba and Baidu, no Indian company has the volume and variety of data needed to build the kind of AI models that shock and awe us with their sophistication. Flipkart might have succeeded, but, as with most Indian businesses, its owners were more interested in selling out to Walmart and enjoying their hard-won cash.

In the winner-takes-all world of the internet, it is now almost impossible for any new startup based on social data to scale up to anything significant. Man-machine interaction, on the other hand, offers a niche but very significant area where Indian entrepreneurship can still gain a toehold by leveraging the knowledge that lies latent, and currently languishing, in public institutions such as the Indian Institutes of Technology (IITs) and the All India Institutes of Medical Sciences (AIIMS).

Like TeamIndus, which made a valiant but ultimately unsuccessful private effort to plant the Tricolour on the Moon, we need a private initiative to focus on and crack this technology. This may be the only way for India not just to hop on to the next big technology bus but to actually drive it.

The Ghost trapped in the biological Machine must be liberated so that the Ghost and the digital Machine, carbon intelligence and silicon intelligence, can work together. This is how we will climb to the next rung on the ladder of human evolution.

Prithwis Mukerjee is an engineer by education, a teacher by profession, a programmer by passion and an imagineer by intention. He has recently published an Indic-themed science fiction novel, Chronotantra.