Are We Close To Realising A Quantum Computer? Yes And No, Quantum Style

Karan Kamble

Sep 13, 2020, 11:36 AM | Updated Sep 14, 2020, 09:01 AM IST

Scientists have been hard at work to get a new kind of computer going for about a couple of decades. This new variety is not a simple upgrade over what you and I use every day. It is different. They call it a “quantum computer”.

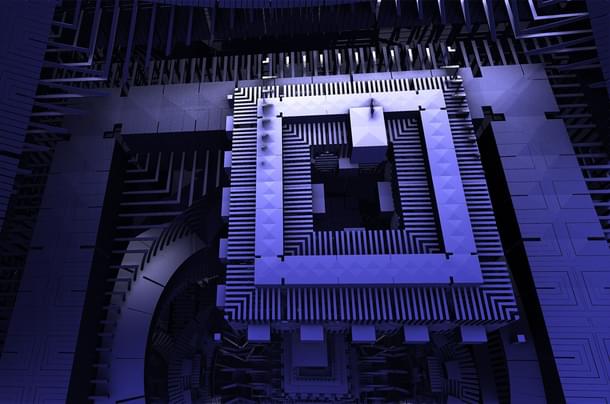

The name doesn’t leave much to the imagination. It is a machine based on the central tenets of the most successful theory of physics yet devised — quantum mechanics. And since it is based on such a powerful theory, it promises to be so advanced that a conventional computer, the one we know and recognise, cannot keep up with it.

Think of the complex real-world problems that are hard to solve and it’s likely that quantum computers will throw up answers to them someday. Examples include simulating complex molecules to design new materials, making better forecasts for weather, earthquakes or volcanoes, mapping out the reaches of the universe, and, yes, demystifying quantum mechanics itself.

“One of the major goals of quantum computers is to simulate a quantum system. It is probably the reason why quantum computation is becoming a major reality,” says Dr Arindam Ghosh, professor at the Department of Physics, Indian Institute of Science.

Given that the quantum computer is full of promise, and work on it has been underway for decades, it’s fair to ask — do we have one yet?

“This is a million-dollar question, and there is no simple answer to it,” says Dr Rajamani Vijayaraghavan, the head of the Quantum Measurement and Control Laboratory at the Tata Institute of Fundamental Research (TIFR). “Depending on how you view it, we already have a quantum computer, or we will have one in the future if the aim is to have one that is practical or commercial in nature.”

We have it and don’t. That sounds about quantum.

In the United States, Google has been setting new benchmarks in quantum computing.

Last year, in October, it declared “quantum supremacy” — a demonstration of a quantum computer’s superiority over its classical counterpart. Google’s Sycamore processor took 200 seconds to make a calculation that, the company claims, would have taken 10,000 years on the world’s most powerful supercomputer.

This accomplishment came with conditions attached. IBM, whose supercomputer “Summit” (the world’s fastest) came second-best to Sycamore, contested the 10,000-year claim and said that the calculation would have instead taken two and a half days with a tweak to how the supercomputer approached the task.

Some experts suggested that the nature of the task, generating random numbers in a quantum way, was not particularly suited to the classical machine. Besides, Google’s quantum processor didn’t dabble in a real-world application.

Yet, Google was on to something. Even for the harshest critic, it provided a glimpse of the spectacular processing power of a quantum computer and what’s possible down the road.

Google did one better recently. They simulated a chemical reaction on their quantum computer — the rearrangement of hydrogen atoms around nitrogen atoms in a diazene molecule (nitrogen hydride or N2H2).

The reaction was a simple one, but it opened the doors to simulating more complex molecules in the future — an eager expectation from a quantum computer.

But how do we get there? That would require scaling up the system. More precisely, the number of “qubits” in the machine would have to increase.

Short for “quantum bits,” qubits are the basic building blocks of quantum computers. They are equivalent to the classical binary bits, zero and one, but with an important difference. While the classical bits can assume states of zero or one, quantum bits can accommodate both zero and one at the same time — a principle in quantum mechanics called superposition.
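To make superposition a little more concrete, here is a minimal sketch in plain Python. It assumes the textbook picture in which a qubit is described by two numbers called amplitudes, and the chance of reading out zero or one is the square of the corresponding amplitude. The function names are illustrative and not taken from any quantum software library.

```python
import math

def hadamard(state):
    """Apply a Hadamard gate to a single-qubit state given as (amp0, amp1)."""
    a0, a1 = state
    s = 1 / math.sqrt(2)
    return (s * (a0 + a1), s * (a0 - a1))

def probabilities(state):
    """Born rule: the chance of reading 0 or 1 is the squared amplitude."""
    return tuple(abs(a) ** 2 for a in state)

zero = (1.0, 0.0)        # behaves like a classical bit set to 0
plus = hadamard(zero)    # equal superposition of 0 and 1
p0, p1 = probabilities(plus)
print(f"P(0) = {p0:.2f}, P(1) = {p1:.2f}")  # P(0) = 0.50, P(1) = 0.50
```

Before it is measured, the qubit in `plus` is neither zero nor one; a readout gives either value with equal probability.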

Similarly, quantum bits can be “entangled”. That is when two qubits are bound in such a way that the state of one cannot be described independently of the other, so measuring one reveals the state of its partner. It is what Albert Einstein in his lifetime described, and dismissed, as “spooky action at a distance”.
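The correlation that entanglement produces can be illustrated with a small simulation. This is only a sketch: it assumes the simplest entangled pair (a so-called Bell state, an equal mix of "both zero" and "both one") and uses ordinary random sampling to stand in for measurement. The names `bell` and `measure` are illustrative.

```python
import math
import random

# Amplitudes for the four possible readouts of two qubits.
s = 1 / math.sqrt(2)
bell = {"00": s, "01": 0.0, "10": 0.0, "11": s}

def measure(state, rng):
    """Pick one readout with probability equal to its squared amplitude."""
    outcomes = list(state)
    weights = [abs(state[o]) ** 2 for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [measure(bell, rng) for _ in range(1000)]

# Each qubit on its own looks random, yet the two readouts always match.
agree = all(bits[0] == bits[1] for bits in samples)
print(agree)  # True
```

A classical simulation like this can reproduce the matching readouts of one fixed measurement, but not the full behaviour of entangled qubits, which is part of why real quantum hardware is needed.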

Qubits in these counterintuitive states are what allow a quantum computer to work its magic.

Presently, the most qubits, 72, are found on a Google device. The Sycamore processor, the Google chip behind the simulation of a chemical reaction, has a 53-qubit configuration. IBM has 53 qubits too, and Intel has 49. Some of the academic labs working with quantum computing technology, such as the one at Harvard, have about 40-50 qubits. In China, researchers say they are on course to develop a 60-qubit quantum computing system within this year.

The grouping is evident. The convergence is, more or less, around 50-60 qubits. That puts us in an interesting place. “About 50 qubits can be considered the breakeven point — the one where the classical computer struggles to keep up with its quantum counterpart,” says Dr Vijayaraghavan.

It is generally acknowledged that once qubits rise to about 100, the classical computer gets left behind entirely. That stage is not far away. According to Dr Ghosh of IISc, the rate of qubit increase is today faster than the development of electronics in the early days.

“Over the next couple of years, we can get to 100-200 qubits,” Dr Vijayaraghavan says.

A few years after that, we could possibly reach 300 qubits. For a perspective on how large that is, this is what Harvard Quantum Initiative co-director Mikhail Lukin has said about such a machine: “If you had a system of 300 qubits, you could store and process more bits of information than the number of particles in the universe.”
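The arithmetic behind these thresholds is easy to check. Describing n qubits on a classical machine takes 2 to the power n amplitudes, so the count doubles with every added qubit. The sketch below rests on two assumptions that are not from the researchers quoted here: each amplitude is stored in 16 bytes, and the number of particles in the universe is taken as a rough order-of-magnitude figure of 10 to the power 80.

```python
BYTES_PER_AMPLITUDE = 16          # assumption: one complex number as two 64-bit floats
PARTICLES_IN_UNIVERSE = 10 ** 80  # assumption: rough order-of-magnitude estimate

def amplitudes(n_qubits):
    """Number of amplitudes needed to describe n qubits classically."""
    return 2 ** n_qubits

def statevector_bytes(n_qubits):
    """Memory a classical machine would need to hold every amplitude."""
    return amplitudes(n_qubits) * BYTES_PER_AMPLITUDE

for n in (3, 50, 100, 300):
    print(n, f"{amplitudes(n):.2e} amplitudes", f"{statevector_bytes(n):.2e} bytes")

print(amplitudes(300) > PARTICLES_IN_UNIVERSE)  # True
```

At 50 qubits the full description already runs to about 18 million gigabytes under these assumptions, which gives a feel for why that figure is treated as the point where classical machines begin to struggle.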

In Indian labs, we are working with far fewer qubits. There is some catching up to do. Typically, India is slow off the blocks in pursuing frontier research. But the good news is that the pace has been picking up over the years, especially in the quantum area.

At TIFR, researchers have developed a unique three-qubit “trimon” quantum processor. Three qubits might seem small in comparison to examples cited earlier, but together they pack a punch. “We have shown that for certain types of algorithms, our three-qubit processor does better than the IBM machine. It turns out that some gate operations are more efficient on our system than the IBM one,” says Dr Vijayaraghavan.

The special ingredient of the trimon processor is three well-connected qubits rather than three individual qubits — a subtle but important difference.

Dr Vijayaraghavan plans to build more of these trimon quantum processors going forward, hoping that the advantages of a single trimon system spill over on to the larger machines.

TIFR is simultaneously developing a conventional seven-qubit transmon (as opposed to trimon) system. It is expected to be ready in about one and a half years.

About a thousand kilometres south, at IISc, two labs under the Department of Instrumentation and Applied Physics are developing quantum processors too, with allied research underway in the Departments of Computer Science and Automation, and Physics, as well as the Centre for Nano Science and Engineering.

IISc plans to develop an eight-qubit superconducting processor within three years.

“Once we have the know-how to build a working eight-qubit processor, scaling it up to tens of qubits in the future is easier, as it is then a matter of engineering progression,” says Dr Ghosh, who is associated with the Quantum Materials and Devices Group at IISc.

It is not hard to imagine India catching up with the more advanced players in the quantum field this decade. The key is to not think of India building the “biggest” or the “best” machine; Indian machines need not have the most qubits. Small scientific breakthroughs that have the power to move the quantum dial decisively forward can come from any lab in India.

Zooming out to a global point of view, the trajectory of quantum computing is hazy beyond a few years. We have been talking about qubits in the hundreds, but, to have commercial relevance, a quantum computer needs to have lakhs of qubits in its armoury. That is the challenge, and a mighty big one.

It isn’t even the case that simply piling up qubits will do the job. As the number of qubits in a system goes up, they must remain stable, highly connected, and error-free. This is because qubits cannot hang on to their quantum states in the presence of environmental “noise” such as heat or stray atoms or molecules. In fact, that is the reason quantum computers are operated at temperatures in the range of a few millikelvin to a kelvin. The slightest disturbance can knock the qubits off their quantum states of superposition and entanglement, leaving them to operate as classical bits.

If you are trying to simulate a quantum system, that’s no good.

For that reason, even if the qubits are few, quantum computation can work well if the qubits are highly connected and error-free.

Companies like Honeywell and IBM are, therefore, looking beyond the number of qubits and instead eyeing a parameter called quantum volume.

Honeywell claimed earlier this year that they had the “world’s highest performing quantum computer” on the basis of quantum volume, even though it had just six qubits.

Dr Ghosh says quantum volume is indeed an important metric. “Number of qubits alone is not the benchmark. You do need enough of them to do meaningful computation, but you need to look at quantum volume, which measures the length and complexity of quantum circuits. The higher the quantum volume, the higher is the potential for solving real-world problems.”
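Quantum volume is usually quoted as 2 to the power n, where n is the size of the largest “square” test circuit (n qubits wide and n layers deep) that a machine runs reliably. The sketch below shows only that final bookkeeping step. The pass/fail results are made up for illustration and do not describe any real device, and the helper simply takes the largest passing size rather than running the statistical test a real benchmark involves.

```python
def quantum_volume(passed_by_size):
    """Return 2**n for the largest circuit size n marked as passed.

    passed_by_size maps a circuit size n (n qubits, n layers) to True/False.
    Returns 1 if no size passed.
    """
    passing = [n for n, ok in passed_by_size.items() if ok]
    return 2 ** max(passing) if passing else 1

# Hypothetical results, invented for this example only.
small_clean = {2: True, 3: True, 4: True, 5: True, 6: True}
large_noisy = {2: True, 3: True, 4: True, 5: False, 6: False}

print(quantum_volume(small_clean))  # 64
print(quantum_volume(large_noisy))  # 16
```

On this measure, a small machine whose qubits behave well can outscore a larger one that fails once circuits grow, however many qubits the larger one has on paper.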

It comes down to error correction. Dr Vijayaraghavan says none of the big quantum machines in the US today use error-correction technology. If that can be demonstrated over the next five years, it would count as a real breakthrough, he says.

Guarding the system against “faults” or “errors” is the focus of researchers now as they look to scale up the qubits in a system. Developing a system with hundreds of thousands of qubits without correcting for errors would cancel out the benefits of a quantum computer.
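The basic idea of error correction, spending several unreliable units to protect one reliable one, can be sketched with a classical analogy: store each bit three times and take a majority vote. This is not how the quantum machines discussed here work, since quantum states cannot simply be copied and real schemes rely on indirect checks instead. It only illustrates why redundancy helps when errors are rare. The function names are illustrative.

```python
def encode(bit):
    """Store one logical bit as three physical copies."""
    return [bit, bit, bit]

def decode(copies):
    """Majority vote: recovers the bit if at most one copy was flipped."""
    return 1 if sum(copies) >= 2 else 0

def logical_error_rate(p):
    """Chance the vote is wrong when each copy flips independently with probability p."""
    return 3 * p ** 2 * (1 - p) + p ** 3

print(decode([1, 0, 1]))  # 1, a single flipped copy is outvoted
for p in (0.1, 0.01):
    print(f"physical error {p}: logical error {logical_error_rate(p):.6f}")
```

With a 10 per cent chance of error on each copy, the protected bit fails less than 3 per cent of the time, and the gain grows as the underlying error rate falls. The price is overhead: many physical units for each usable one.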

As is the case with any research in frontier areas, progress will depend on scientific breakthroughs across several different fields, from software to physics to materials science and engineering.

In light of that, collaboration between academia and industry is going to play a major role going forward. Playing to their respective strengths, academic labs can focus on supplying the core expertise necessary to get a quantum computer going while industry can provide the engineering muscle to build the intricate stuff. Both are important parts of the quantum computing puzzle. At the end of the day, the quantum part of a quantum computer is tiny. Most of the machine is high-end electronics. Industry can support that.

It is useful to recall at this point that even our conventional computers took decades to develop, starting from the first transistor in 1947 to the first microprocessor in 1971. The computers that we use today would be unrecognisable to people in the 1970s. In the same way, what quantum computing will look like in the future, say, 20 years down the line, is unknown to us today.

However, governments around the world, including India’s, are putting their weight behind the development of quantum technology. It is easy to see why. Hopefully, this decade can be the springboard that launches quantum computing higher than ever before. All signs point to it.

Karan Kamble writes on science and technology. He occasionally wears the hat of a video anchor for Swarajya's online video programmes.